7 Ways Data Quality Failures Derail AI Projects – And How to Spot Them

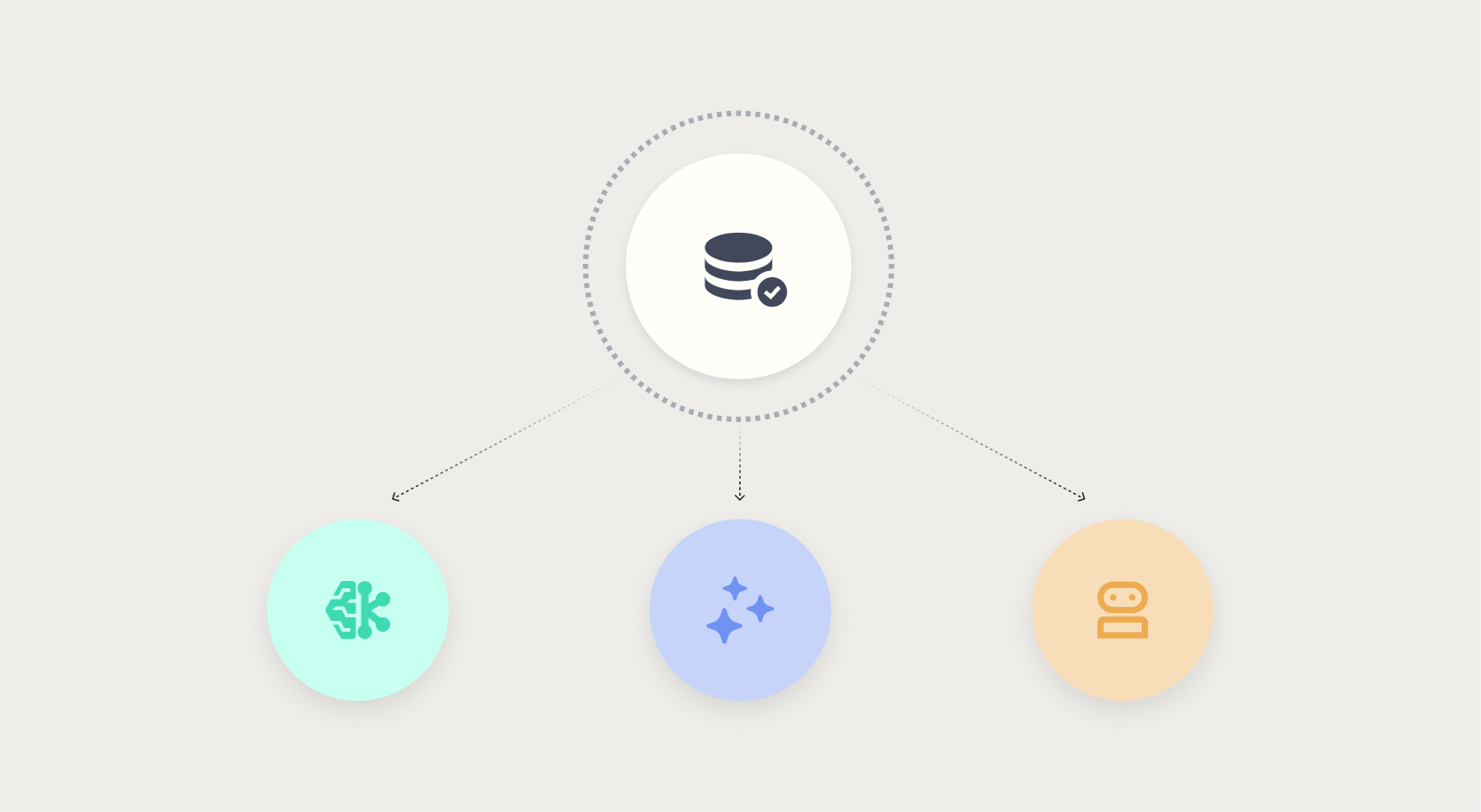

Nobody builds bad models on purpose. Yet poor data quality is a common culprit behind AI projects that stall, drift, or fail without anyone noticing. Whether the system is a traditional machine learning model, a generative AI chatbot, or an autonomous agent acting on incomplete data, the consequences can be severe, ranging from a $2.3 million margin shortfall to confident wrong answers that erode customer trust. Traditional ML failures are costly but visible; generative and agentic AI remove even that containment. As AI moves from prediction to action, the tolerance for data quality failures shrinks and detection becomes harder. This article covers seven ways data quality affects AI, why the risks escalate with generative and agentic systems, and how to recognize hidden failures before they cause damage.

1. Traditional Machine Learning: Visible but Costly Failures

In classic machine learning, data quality issues often surface through dashboards, anomalies, or analyst reviews. A pricing model might produce a $2.3 million margin shortfall because its training data contained systematic errors such as outdated cost figures or mislabeled transactions. At least these failures are visible: the dashboard shows a wrong number, someone catches it, and the model is retrained. The damage is contained, but it still hurts budgets and timelines. Even in a relatively transparent ML environment, poor data quality can cause significant financial loss before anyone takes corrective action. Visibility doesn’t prevent the problem; it only helps after the fact.
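A pre-training check can catch the kinds of errors named above before they reach the model. The following is a minimal sketch, not a complete validation suite: the `Transaction` fields, the 90-day staleness limit, and the allowed labels are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical training row for a pricing model; field names are assumptions.
@dataclass
class Transaction:
    sku: str
    unit_cost: float
    unit_price: float
    cost_updated_on: date
    label: str

ALLOWED_LABELS = {"sale", "return"}  # assumed label vocabulary

def find_issues(rows, as_of, max_cost_age_days=90):
    """Return (row index, problem) pairs for rows that should be reviewed before training."""
    issues = []
    for i, row in enumerate(rows):
        if as_of - row.cost_updated_on > timedelta(days=max_cost_age_days):
            issues.append((i, "stale cost"))
        if row.label not in ALLOWED_LABELS:
            issues.append((i, "unknown label"))
        if row.unit_cost <= 0 or row.unit_price <= 0:
            issues.append((i, "non-positive amount"))
    return issues

rows = [
    Transaction("A1", 4.00, 6.50, date(2024, 5, 1), "sale"),
    Transaction("B2", 3.20, 5.00, date(2023, 1, 15), "sale"),   # cost not updated for over a year
    Transaction("C3", 2.10, 3.00, date(2024, 4, 20), "refnd"),  # mistyped label
]
issues = find_issues(rows, as_of=date(2024, 6, 1))
print(issues)
```

Returning the flagged rows instead of raising on the first problem lets a team see how widespread an error is, which helps separate a one-off typo from a systematic fault.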

2. Generative AI: Confident Wrong Answers with No Warning

Generative AI systems, such as chatbots that draw on stale knowledge bases, produce outputs that look authoritative but can be completely incorrect. Unlike an ML dashboard, where a wrong number can be spotted, a chatbot delivers a confident wrong answer with no internal signal that something is amiss. For example, a customer service bot might tell a user that a product is available when it has been discontinued, because its source data is outdated. The AI operated exactly as designed, on data that was never fit for purpose. This invisibility makes generative AI especially dangerous: errors spread unnoticed, and organizations often discover them only after reputation or operations have already suffered.

3. Agentic AI: Autonomous Actions on Incomplete Data

Autonomous agents take data quality failures to a new level. Consider a procurement agent that commits budget based on supplier data that is missing crucial price updates. The agent acts swiftly, without human review, and the commitment may be difficult or impossible to reverse. The damage is not just a wrong output; it is a real-world action with financial and operational consequences. Agentic AI systems amplify data quality issues because they operate on trust: they assume the data is correct and act on it immediately. The cost of poor data here is not a retraining cycle but actual, often expensive, errors in the field.

4. The Steep Cost of Delayed Detection

Across every type of AI system, the later a data quality flaw is caught, the more expensive it becomes. A shortfall like the $2.3 million example above is the kind of loss that early checks on training data are meant to prevent. In generative and agentic systems, the gap between a data error and its detection can be even longer because there is no inherent feedback loop. A chatbot may spread misinformation for weeks before a customer complaint triggers an audit. By then, the brand damage is done. Because these failures are hidden, organizations often underestimate the total cost and focus on immediate fixes rather than preventing the root cause.

5. Why Generative AI Data Quality Is Especially Hard

Generative AI models rely on large, often unstructured knowledge bases that may or may not be kept up to date. Stale data is a prime culprit. Unlike traditional ML datasets, which have defined schemas, knowledge bases for chatbots can include outdated articles, biased content, or contradictory information. The model cannot distinguish current from obsolete data on its own. This makes “fit for purpose” harder to define and enforce. Organizations must implement rigorous data freshness checks, version control, and automated validation pipelines to keep generative AI outputs trustworthy.
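A data freshness check can be as simple as separating recently reviewed articles from stale ones before the chatbot is allowed to retrieve them. The sketch below assumes each article carries a `last_reviewed` date and uses a 180-day limit; both are illustrative assumptions, and the `Article` type is hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical knowledge-base entry; a real store would hold more metadata.
@dataclass
class Article:
    doc_id: str
    text: str
    last_reviewed: date

def split_by_freshness(articles, today, max_age_days=180):
    """Split articles into (fresh, stale) using the last review date."""
    cutoff = today - timedelta(days=max_age_days)
    fresh, stale = [], []
    for article in articles:
        if article.last_reviewed >= cutoff:
            fresh.append(article)
        else:
            stale.append(article)
    return fresh, stale

kb = [
    Article("kb-101", "Model X is in stock.", date(2022, 3, 1)),
    Article("kb-102", "Model X was discontinued.", date(2024, 5, 10)),
]
fresh, stale = split_by_freshness(kb, today=date(2024, 6, 1))
print("serve:", [a.doc_id for a in fresh])
print("review:", [a.doc_id for a in stale])
```

Only the fresh list would be exposed to retrieval, while the stale list goes to a human for review. Age alone does not prove an article is wrong, so this is a filter for attention rather than a correctness test.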

6. The ‘Fit for Purpose’ Trap

A recurring finding in data quality post-mortems is that the data was never fit for purpose. This happens when teams take data collected for one purpose (e.g., reporting) and use it to train AI for another (e.g., real-time decision-making). The data may look clean in its original context, but it lacks the attributes, timeliness, or granularity the AI task needs. For example, sales data used to train a pricing model might include returns that skew margins. Recognizing this mismatch early is crucial. Organizations must assess data lineage and context before handing data to any AI system.
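One way to make “fit for purpose” concrete is to write down what the AI task needs and compare the dataset against that list. The following sketch assumes records are plain dictionaries with a `recorded_on` date and a `type` field; the field names, the seven-day limit, and the excluded record type are hypothetical requirements for a pricing task.

```python
from datetime import date

def fitness_gaps(records, required_fields, max_age_days, today, excluded_types=()):
    """List the ways a dataset falls short of the stated task requirements."""
    gaps = []
    # Attributes: every required field must be populated on every record.
    missing = sorted({f for r in records for f in required_fields if r.get(f) is None})
    if missing:
        gaps.append(f"missing fields: {', '.join(missing)}")
    # Timeliness: the newest record must be recent enough for the task.
    age_days = (today - max(r["recorded_on"] for r in records)).days
    if age_days > max_age_days:
        gaps.append(f"newest record is {age_days} days old")
    # Content: record types that are valid for reporting but distort this task.
    unwanted = sum(1 for r in records if r.get("type") in excluded_types)
    if unwanted:
        gaps.append(f"{unwanted} record(s) of excluded type")
    return gaps

reporting_extract = [
    {"type": "sale", "amount": 100.0, "unit_cost": 60.0, "recorded_on": date(2024, 5, 1)},
    {"type": "return", "amount": -100.0, "unit_cost": None, "recorded_on": date(2024, 5, 3)},
]
gaps = fitness_gaps(
    reporting_extract,
    required_fields=("amount", "unit_cost"),
    max_age_days=7,
    today=date(2024, 6, 1),
    excluded_types=("return",),
)
for gap in gaps:
    print(gap)
```

The same extract could pass with different requirements, which is the point: fitness is a property of the data and the task together, not of the data alone.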

7. Moving from Prediction to Action: Less Tolerance for Error

The further AI moves from passive prediction to active decision-making, the less tolerance there is for data quality failures. A predictive model whose demand forecast is slightly off can be corrected. An autonomous agent that places orders based on that forecast creates real inventory problems, and the errors compound. Detection becomes harder because actions are often irreversible and lack the immediate feedback of a dashboard error. As AI agents become more common, organizations must embed data quality checks into the action pipeline itself, not just the training phase.
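Embedding a check in the action pipeline can mean placing a gate between the agent’s decision and its execution. The sketch below uses the procurement scenario from earlier in the article; the `SupplierQuote` type, the 24-hour price-age limit, and the `execute`/`escalate` outcomes are assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical input the agent would act on.
@dataclass
class SupplierQuote:
    supplier: str
    unit_price: Optional[float]
    price_as_of: Optional[datetime]

def decide(quote, now, max_price_age=timedelta(hours=24)):
    """Return 'execute' only if the quote passes every check, else 'escalate'."""
    if quote.unit_price is None or quote.price_as_of is None:
        return "escalate"  # incomplete data
    if quote.unit_price <= 0:
        return "escalate"  # implausible value
    if now - quote.price_as_of > max_price_age:
        return "escalate"  # price may have changed since it was recorded
    return "execute"

now = datetime(2024, 6, 1, 12, 0)
current = SupplierQuote("Acme", 12.50, datetime(2024, 6, 1, 9, 0))
outdated = SupplierQuote("Acme", 11.00, datetime(2024, 5, 20, 9, 0))
print(decide(current, now))
print(decide(outdated, now))
```

The gate defaults to escalation, so a missing or stale price sends the order to a human instead of committing budget. That trades some speed for the chance to stop an action that cannot easily be undone.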

Data quality is not a one-time fix but a continuous discipline. The evolution from ML to generative AI to agentic AI demands proactive validation, contextual awareness, and automated monitoring. Without it, even the best-designed models will fail silently, leaving a trail of costly mistakes. Understanding these seven failure modes is the first step toward building AI systems that are not only powerful but also trustworthy.